MySQL Performance Tuning Guide

Introduction

Tuning Guidelines

The MySQL performance varies depending on the hardware, operating system (OS), and basic software in use. It is also affected by the design of each subsystem, algorithms used, and compiler settings.

Observe the following guidelines when tuning the performance: The guidelines are as follows:

During performance analysis, analyze system resource bottlenecks from multiple aspects. Poor system performance may not be caused by the system itself but by other factors. For example, high CPU usage may be caused by insufficient memory capacity and the CPU resources may be exhausted by memory scheduling.

Adjust only one parameter of a specific aspect that affects the performance at a time. It is difficult to determine which parameter causes the impact on performance when multiple parameters are adjusted at the same time.

During the system performance analysis, the performance analysis tool also consumes certain CPU and memory resources, which may worsen the resource bottleneck of the system.

Ensure that the program runs properly after performance tuning.

Performance tuning is a continuous process. The result of each tuning should be fed to the subsequent version development.

Performance tuning cannot compromise code readability and maintainability.

Tuning Flow

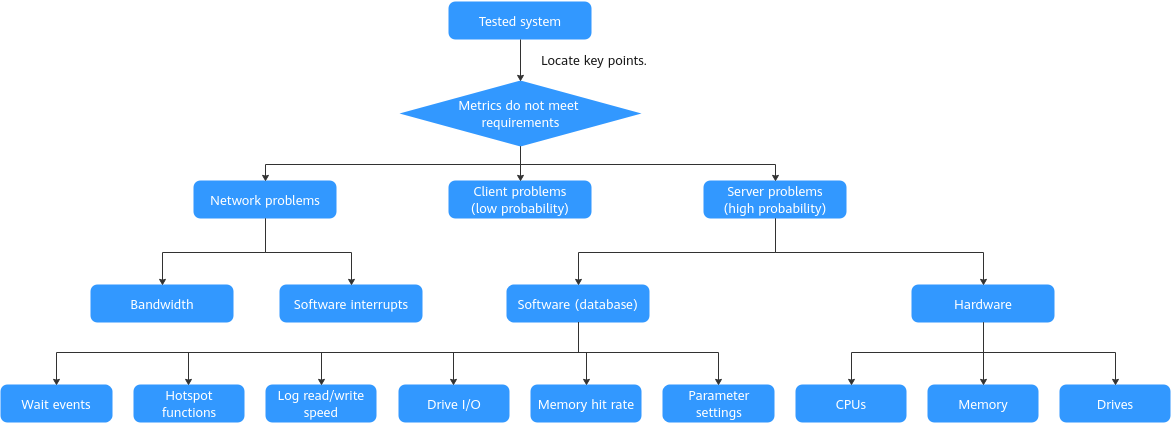

Identify problems, find performance bottlenecks, and determine a tuning method based on the bottleneck level.

The following figure shows the MySQL tuning flow.

The tuning analysis process is as follows:

- In most cases, even before the entire pressure test traffic enters the server, the pressure test result may fail to meet the expectation due to network factors, such as the bandwidth, maximum number of connections, and limit on the number of new connections.

- Check whether the key metrics meet requirements. If not, locate the possible problem causes. The problems may exist on a server (in most cases) or a client (in few cases).

- If the problem cause is on a server, check the hardware metrics such as the CPU, memory, drive I/O, and network I/O metrics. If any abnormal hardware metric is detected, further analysis is required.

- If the hardware metrics are normal, check the database metrics, such as the wait events and memory hit ratio.

- If hardware and database metrics are normal, check the algorithms, buffer, cache, synchronization, and asynchronization. If any abnormal metric is detected, further analysis is required.

Hardware Tuning

Purpose

You can configure advanced BIOS settings for different server hardware to improve server performance.

Method

This method applies to Kunpeng servers. For x86 servers, such as Intel servers, you can retain the default BIOS configurations.

Disable the SMMU (only for the Kunpeng server).

During the server restart, press Delete to access the BIOS, choose Advanced > MISC Config, and press Enter.

Set Support Smmu to Disable.

Note: Disable the SMMU feature only in non-virtualization scenarios. In virtualization scenarios, enable the SMMU.

Disable prefetch.

On the BIOS, choose Advanced > MISC Config and press Enter.

Set CPU Prefetching Configuration to Disabled and press F10.

OS Tuning

Note: Skip this section if the Gazelle tuning method is used.

NIC Interrupt-Core Binding

Purpose

To improve system network performance, you can disable the irqbalance service and manually bind NIC interrupts to dedicated cores to isolate NIC interrupts from service requests.

Method

The optimal number of CPUs for binding interrupts varies depending on the hardware configuration. For example, the optimal number of CPUs for binding interrupts on the Kunpeng 920 5250 processor is 5. You can observe the usage of the five CPUs to determine whether to adjust the number.

The following script is used to set the optimal interrupt binding for MySQL when the Kunpeng 920 5250 processor and Huawei TM280 25G NIC are used. $1 indicates the NIC name, $2 indicates the number of queues (5), and the $3 indicates the bus name corresponding to the NIC, which can be queried by running ethtool -i <NIC_name>:

#!/bin/bash

eth1=$1

cnt=$2

bus=$3

ethtool -L $eth1 combined $cnt

irq1=`cat /proc/interrupts| grep -E ${bus} | head -n$cnt | awk -F ':' '{print $1}'`

irq1=`echo $irq1`

cpulist=(91 92 93 94 95) # Set the cores dedicated for handling interrupts.

c=0

forirq in $irq1

do

echo ${cpulist[c]} "->" $irq

echo ${cpulist[c]} > /proc/irq/$irq/smp_affinity_list

let "c++"

done

Note: If Gazelle is used, you do not need to use the method described in this section.

NUMA Core Binding

Purpose

NUMA core binding reduces cross-NUMA memory access and improves system memory access performance.

Method

Based on the NIC interrupt settings in the previous section, set the NUMA core binding range to the remaining cores (0 to 90) before running the MySQL startup command. $mysql_path indicates the MySQL installation path.

numactl -C 0-90 -i 0-3 $mysql_path/bin/mysqld --defaults-file=/etc/my.cnf &

Note: If Gazelle is used, you do not need to use the method described in this section.

Scheduling Parameter Tuning

Purpose

In high-load scenarios, the CPU utilization cannot reach 100%. In-depth analysis of the scheduling trace of each thread shows that the kernel cannot find a proper process for migration during load balancing. As a result, the CPU is intermittently idle and load balancing fails, wasting CPU resources. You can enable the openEuler STEAL mode to further improve the CPU utilization and system performance. (This feature is available in openEuler 20.03 SP2 and later versions.)

Method

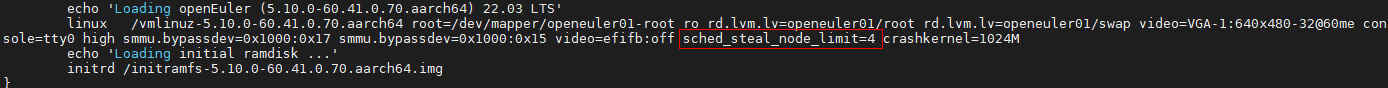

- Add sched_steal_node_limit=4 to the end of the kernel startup items in /etc/grub2-efi.cfg, as shown in the following figure.

After the modification, reboot the system for the modification to take effect.

- Set the STEAL scheduling feature as follows after the reboot:

echo STEAL > /sys/kernel/debug/sched_features

Memory Hugepage Optimization

Purpose

The translation lookaside buffer (TLB) is the high-speed cache in the CPU for the page table that stores the mapping between the page addresses of the virtual addresses and the page addresses of the physical addresses. A higher TLB hit ratio indicates better page table query performance. In a given service scenario, increasing the memory page size can improve the TLB hit ratio and access efficiency, thereby improving the server performance.

Method

Change the memory page size of the kernel.

Run the

getconf PAGESIZEcommand to check the memory page size. If the memory page size is 4096 (4 KB), you can increase the page size by changing the memory page size value of the Linux kernel. You need to recompile the kernel after modifying the kernel compilation options. The procedure is as follows:Run

make menuconfig.Set PAGESIZE to 64K (Kernel Features-->Page size(64KB)).

Compile and install the kernel.

Gazelle Protocol Stack Tuning

Purpose

The deep layers of the native kernel network protocol stack bring high overhead and high system call costs. The Gazelle user-mode protocol stack can be used to replace the kernel protocol stack. By hooking the POSIX interfaces, Gazelle eliminates overheads caused by system calls, thereby greatly improving the network I/O throughput of an application.

Method

- Install the dependency packages.

Configure the Yum source of openEuler and run the yum command to install the dependencies.

yum install dpdk libconfig numactl libboundsheck libcap gazelle

Install the ko file as the root user.

Bind the NIC from the kernel driver to the user-mode driver. Choose one of the following .ko files as required. The MLX4 and MLX5 NICs do not need to be bound to the VFIO or UIO driver.

#If the IOMMU is available

modprobe vfio-pci

#If the IOMMU is not available and the VFIO supports the no-IOMMU mode

modprobe vfio enable_unsafe_noiommu_mode=1

modprobe vfio-pci

#Other cases

modprobe igb_uio

- Bind DPDK to an NIC.

Bind the service NIC (enp3s0 is used as an example) to the driver selected in the previous step to provide an interface for the user-mode NIC driver to access NIC resources.

#Remove the service NIC enp3s0.

ip link set enp3s0 down

#Use vfio-pci

dpdk-devbind -b vfio-pci enp3s0

#Use igb_uio

dpdk-devbind -b igb_uio enp3s0

- Configure memory hugepages.

Gazelle uses hugepage memory to improve efficiency. You can configure any size for the memory hugepages reserved by the system using the root permissions. Select a page size based as required and configure sufficient memory hugepages. By default, 2 GB hugepage memory is configured for each memory node, and the page size is 2 MB.

#Statically allocate 2 MB memory hugepages: 2M * 1024*4 = 8G

echo 8192 > /sys/kernel/mm/hugepages/hugepages-2048kB/nr_hugepages

#View configuration results.

grep Huge /proc/meminfo

- Mount hugepage memory.

To create a directory for the lstack process to access hugepage memory, run the following commands:

mkdir -p /mnt/hugepages-gazelle

chmod -R 700 /mnt/hugepages-gazelle

mount -t hugetlbfs nodev /mnt/hugepages-gazelle -o pagesize=2M

- Use Gazelle for the application.

Use LD_PRELOAD to preload the dynamic library of Gazelle. Use GAZELLE_BIND_PROCNAME to specify the MySQL process name.

GAZELLE_BIND_PROCNAME=mysqld LD_PRELOAD=/usr/lib64/liblstack.so $mysql_path/bin/mysqld --defaults-file=/etc/my.cnf --bind-address=192.168.1.10 &

In the preceding command, the parameter of bind-address is the IP address of the service NIC on the server, which must be the same as the value of host_addr in the Gazelle configuration file.

- Modify the Gazelle configuration file.

TModify the Gazelle configuration file /etc/gazelle/lstack.conf based on the hardware environment and software requirements. The following is an example:

dpdk_args=["--socket-mem", "2048,2048,2048,2048", "--huge-dir", "/mnt/hugepages-gazelle", "--proc-type", "primary", "--legacy-mem", "--map-perfect"]

use_ltran=0

kni_switch=0

low_power_mode=0

listen_shadow=1

num_cpus="18,38,58,78 "

host_addr="192.168.1.10"

mask_addr="255.255.255.0"

gateway_addr="192.168.1.1"

devices="aa:bb:cc:dd:ee:ff"

The --socket-mem parameter indicates the memory allocated to each memory node. The default value is 2048 (MB). In this example, four memory nodes are allocated with 2 GB (2048) memory. The --huge-dir parameter indicates the directory to which hugepage memory is mounted. num_cpus records the IDs of the CPUs bound to the lstack thread. You can select CPUs by NUMA node. The host_addr, mask_addr, gateway_addr, and devices parameters indicate the IP address, subnet mask, gateway address, and MAC address of the service NIC, respectively .

For details, see the Gazelle User Guide

MySQL Tuning

Database Parameter Tuning

Purpose

Modify database parameter settings to improve server performance.

Method

The default configuration file path is /etc/my.cnf. You can use the following configuration file parameters to start the database:

[mysqld_safe]

log-error=/data/mysql/log/mysql.log

pid-file=/data/mysql/run/mysqld.pid

[client]

socket=/data/mysql/run/mysql.sock

default-character-set=utf8

[mysqld]

basedir=/usr/local/mysql

tmpdir=/data/mysql/tmp

datadir=/data/mysql/data

socket=/data/mysql/run/mysql.sock

port=3306

user=root

default_authentication_plugin=mysql_native_password

ssl=0 # Disable SSL.

max_connections=2000 # Set the maximum number of connections.

back_log=2048 #Set the number of cached session requests.

performance_schema=OFF # Disable the performance mode.

max_prepared_stmt_count=128000

#file

innodb_file_per_table=on # Set one file for each table.

innodb_log_file_size=1500M # Set the log file size.

innodb_log_files_in_group=32 # Set the number of log file groups.

innodb_open_files=4000 # Set the maximum number of tables that can be opened.

#buffers

innodb_buffer_pool_size=230G # Set the buffer pool size, which is generally 60% of the server memory.

innodb_buffer_pool_instances=16 # Set the number of buffer pool instances to improve the concurrency capability.

innodb_log_buffer_size=64M # Set the log buffer size.

#tune

sync_binlog=1 # Set the number of sync_binlog transactions to be submitted for drive flushing each time.

innodb_flush_log_at_trx_commit=1 # Each time when a transaction is submitted, MySQL writes the data in the log buffer to the log file and flushes the data to drives.

innodb_use_native_aio=1 # Enable asynchronous I/O.

innodb_spin_wait_delay=180 # Set the spin_wait_delay parameter to prevent system spin.

innodb_sync_spin_loops=25 # Set the spin_loops loop times to prevent system spin.

innodb_spin_wait_pause_multiplier=25 # Set a multiplier value used to determine the number of PAUSE instructions in spin-wait loops.

innodb_flush_method=O_DIRECT # Set the open and write modes of InnoDB data files and redo logs.

innodb_io_capacity=20000 # Set the maximum IOPS of InnoDB background threads.

innodb_io_capacity_max=40000 # Set the maximum IOPS of InnoDB background threads under pressure.

innodb_lru_scan_depth=9000 # Set the number of dirty pages flushed by the page cleaner thread each time.

innodb_page_cleaners=16 # Set the number of threads for writing dirty data to drives.

table_open_cache_instances=32 # Set the maximum number of table cache instances.

table_open_cache=30000 # Set the maximum number of open tables cached in one table cache instance.

#perf special

innodb_flush_neighbors=0 # Check all pages in the extent where the page is located. If the page is dirty, flush all pages. This parameter is disabled for SSDs.

innodb_write_io_threads=16 # Set the number of write threads.

innodb_read_io_threads=16 # Set the number of read threads.

innodb_purge_threads=32 # Set the number of undo page threads to be purged.

innodb_adaptive_hash_index=0

sql_mode=STRICT_TRANS_TABLES,NO_ENGINE_SUBSTITUTION,NO_AUTO_VALUE_ON_ZERO,STRICT_ALL_TABLES

Table 1 Database parameters

| Parameter | Description | Tuning Suggestion |

|---|---|---|

| innodb_thread_concurrency | OS threads used by InnoDB to process the user transaction requests | The default value 0 is recommended, that is, the number of concurrent threads is not limited by default. |

| innodb_read_io_threads | Number of threads that process the read requests in the request queue | Set this parameter based on the number of CPU cores and the read/write ratio. |

| innodb_write_io_threads | Number of threads that process the write requests in the request queue | Set this parameter based on the number of CPU cores and the read/write ratio. |

| innodb_buffer_pool_instances | Number of memory buffer pools. Enable multiple memory buffer pools to hash data to be buffered to different buffer pools. In this way, the memory can be read and written concurrently. | Recommended value: 8 to 32 |

| innodb_open_files | Number of files that can be opened by InnoDB in the innodb_file_per_table mode | A larger value is recommended, especially when there are a large number of tables. |

| innodb_buffer_pool_size | Size of the buffer that caches data and indexes | Recommended value: 60% of the memory |

| innodb_log_buffer_size | Size of the buffer that caches redo logs | Default value: 64 MB. Set this parameter based on the value of innodb_log_wait. |

| innodb_io_capacity | Maximum IOPS of InnoDB background threads | Recommended value: 75% of the total I/O QPS |

| innodb_log_files_in_group | ||

| Number of redo log groups | - | |

| innodb_log_file_size | Size of the redo log file | A larger value is recommended for write-intensive scenarios. However, large size of the redo log file prolongs data restoration. When testing the ultimate performance in the non-production environment, increase the log file size as large as possible. In commercial scenarios, consider the data restoration time when setting this parameter. |

| innodb_flush_method | Method of flushing drives for logs and data. The options include the following:datasync: The data write operation is considered complete when data is written to the buffer of the operating system. Then, the operating system flushes the data from the buffer to drives and updates the metadata of files in drives.O_DSYNC: Logs are written to drives, and data files are flushed through fsync.O_DIRECT: Data files are directly written from the MySQL InnoDB buffer to drives without being buffered in the operating system. The write operation is completed by the flush operation. Logs are buffered by the operating system. | |

| innodb_spin_wait_delay | Polling interval | Set this parameter to a value as long as there is no spin_lock hotspot function. Recommended value: 180 |

| innodb_sync_spin_loops | Number of polling times | Set this parameter to a value as long as there is no spin_lock hotspot function. Recommended value: 25 |

| innodb_spin_wait_pause_multiplier | ||

| Random number used to control the polling interval | Set this parameter to a value as long as there is no spin_lock hotspot function. Default value: 50; recommended value: 25 to 50 | |

| innodb_lru_scan_depth | Number of available pages in the LRU list | Default value: 1024. When testing the ultimate performance in the non-production environment, you can increase the value to reduce the number of checkpoints. |

| innodb_page_cleaners | Number of threads for refreshing dirty data | Set it to the same value as innodb_buffer_pool_instances. |

| innodb_purge_threads | Number of threads for purging undo | - |

| innodb_flush_log_at_trx_commit | 0: writes binlog every second no matter whether transactions are submitted.1: writes the content in the log buffer to drives each time a transaction is submitted. The log files are updated to drives. This mode delivers the best security.2: writes data to the operating system cache each time a transaction is submitted, and the operating system updates data to the drives. This mode delivers the best performance. | When testing the ultimate performance in a non-production environment, set this parameter to 0. |

| innodb_doublewrite | Whether to enable the double write function | When testing the ultimate performance in a non-production environment, set this parameter to 0 to disable double write. |

| ssl | Whether to enable SSL | SSL has great impact on performance. When testing the ultimate performance in a non-production environment, set this parameter to 0 to disable SSL. In commercial scenarios, set this parameter based on customer requirements. |

| table_open_cache_instances | Number of partitions that cache table handles in MySQL | Recommended value: 16 to 32 |

| table_open_cache | Number of tables opened by Mysqld | Recommended value: 30000 |

| skip_log_bin | Whether to enable binlog | When testing the ultimate performance in a non-production environment, add this parameter to the configuration file and disable binlog (add the following content to the configuration file:skip_log_bin#log-bin=mysql-bin). |

| performance_schema | Whether to enable the performance schema | When testing the ultimate performance in a non-production environment, set this parameter to OFF to disable the performance schema. |

Database Kernel Tuning

Purpose

Modify the source code of the MySQL database to improve the database performance. To use the database kernel optimization patch, you need to recompile the database.

Method

MySQL database kernel tuning involves two scenarios: online transaction processing (OLTP) and online analytical processing (OLAP). Different optimization patches are used in different scenarios.

OLTP is a transaction-oriented processing system. It mainly processes small transactions and queries and responds quickly to user operations. For details about the OLTP kernel optimization patch, see MySQL Fine-Grained Lock Tuning.

OLAP analyzes and queries current and historical data and generates reports to support management and decision-making. For details about the OLAP kernel optimization patch, see MySQL OLAP Parallel Optimization.